TUCSON, Ariz. (13 News) – The University of Arizona considers unauthorized use of artificial intelligence cheating, but the school has disabled AI detection features on its plagiarism software due to reliability concerns and false positives.

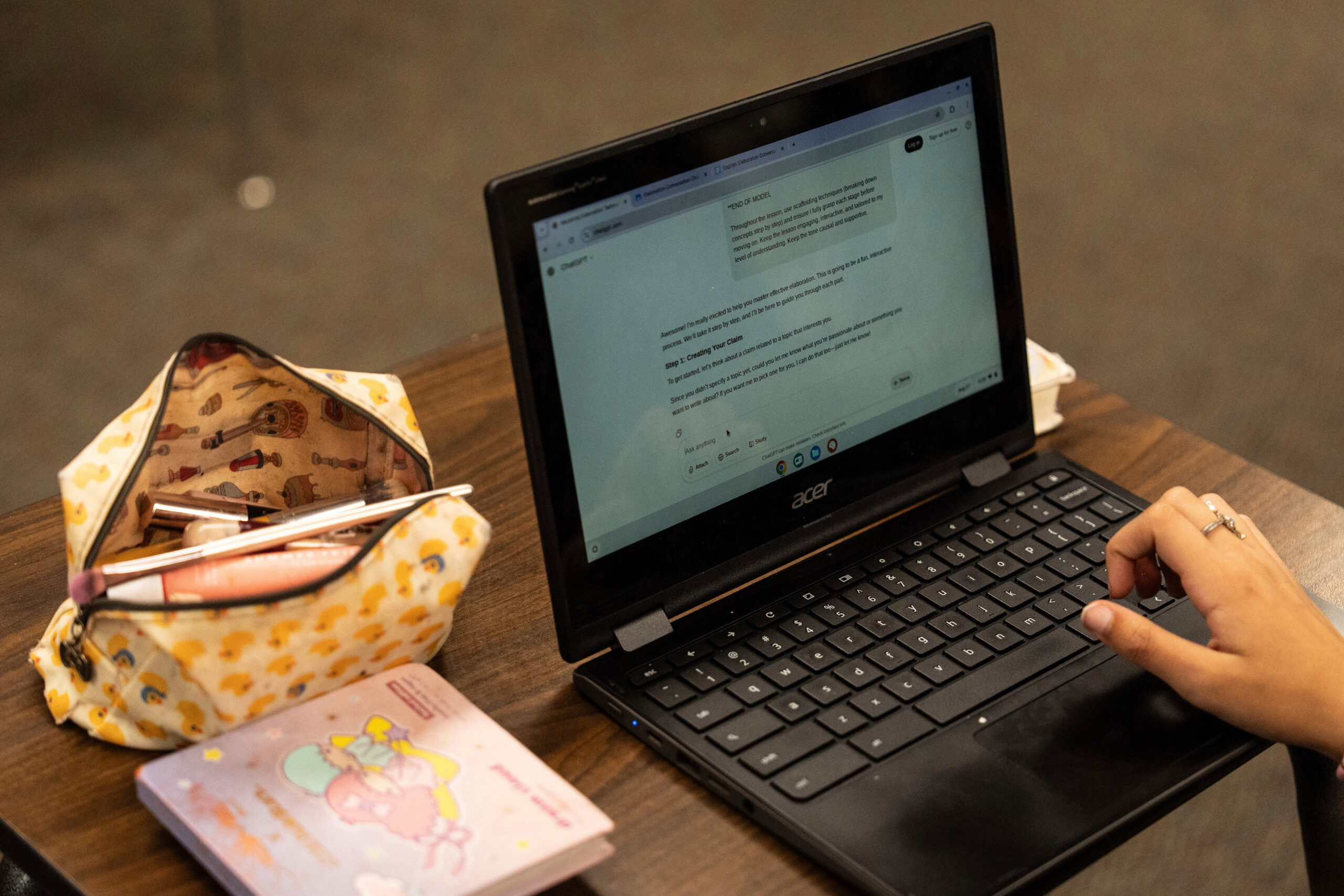

The policy creates a challenge for students and educators navigating learning and grading in an era where AI tools are increasingly accessible.

The university says every instructor needs their own AI policy, communicated clearly to students, but clear guidance on the subject remains hard to come by.


Associate Professor Steve Bethard, an expert in machine learning at the UA College of Information Science, said AI has changed how educators assess student effort.

“It has reduced our ability to judge effort. I can no longer say, ‘Hey, write me an essay,’ and then I say, ‘Oh, someone who’s written put a bunch of effort in, so now I can grade them based on that.’ No, because we know there are now models that can do it super cheap,” Bethard said.

Bethard is developing methods to use and grade around AI in classrooms. For some assignments, he prohibits AI tools and designs tasks where unauthorized use would be obvious.

“There are some assignments where I’ll say, ‘Don’t use these tools,’ and typically I design these assignments in such a way that if they do use those tools, it will be obvious to me that they have,” he said.

Creative detection methods emerge

Bethard uses techniques like embedding hidden prompts in assignments that would trigger AI responses with specific characteristics, such as turtle metaphors, making unauthorized AI use detectable when students copy responses directly.

In his courses, students are often encouraged to use AI for coding tasks as long as they cite the assistance, since AI skills will benefit their future careers.

High stakes for academic dishonesty

Unauthorized AI use at the University of Arizona and other schools, including area high schools, is treated as academic dishonesty and could result in failing grades, suspension, or expulsion.

Current AI detection software lacks the accuracy needed to serve as standalone proof of cheating.

“You would need to provide evidence, and if your evidence was, ‘I ran one of these online checkers and it said there’s an 80 percent chance this is ChatGPT,’ that’s not very good evidence,” Bethard said.

The detection tools cannot provide definitive results about whether students used AI assistance.

“They’re not 100% ‘Yes this student used it’ or 100% ‘No, they didn’t,’ so any decision based on them has to recognize that,” he said.

Students seek ways to prove original work

Students can run AI detection tools on their own work to demonstrate authenticity, Bethard suggested.

If multiple detection tools produce different results on student work, it could serve as evidence that the work was original, since the tools would likely agree if AI had been used.

Academic integrity cases still rely on traditional methods of evaluation, with AI detection having limited influence on final judgments.

“It’s a new world, and it’s exciting because they can do a lot of stuff, but it’s also scary because now we have to double-check everything,” Bethard said.

Universities are exploring contracts with AI companies to provide standardized models for all students, similar to how schools provide email accounts.
